Cooling Embedded AI Electronics

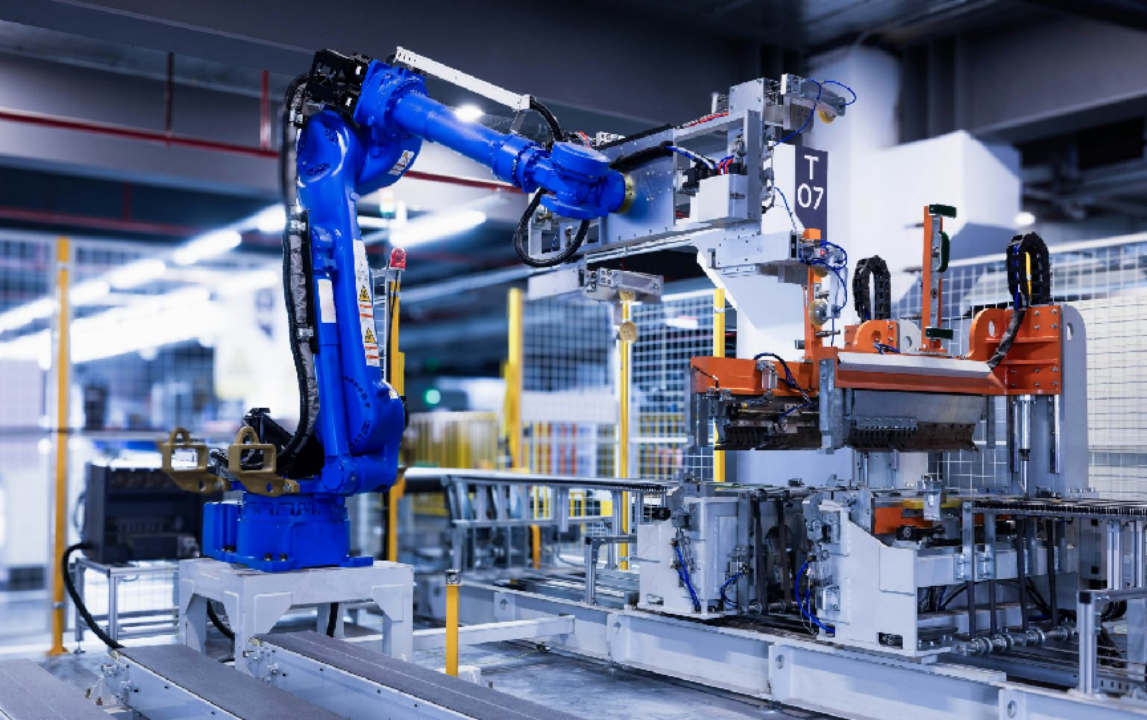

Embedded AI enables dedicated functions within larger systems. These AI chips power countless devices—robotic arms, smart thermostats, security cameras, medical instruments, drones, and vehicles—enhancing functionality and decision-making at the edge.

ChatGPT is one of the most visited websites in the world. Online AI tools such as ChatGPT, Gemini, Perplexity AI, Grok, and many others are becoming increasingly popular and specialized. This demand is driving the growth of power-hungry AI data centers, where hundreds of thousands of GPU chips each draw upwards of 1,000 watts.

But millions of lower power AI chips are running quietly in edge applications all around us.

In smart homes, embedded AI powers thermostats, voice/image recognition, and security. In factories, it drives automated quality control, predictive maintenance, and robotic assembly.

Using local AI inference, these systems make independent decisions, predict outcomes, and automate operations in real time. Connected via the Internet of Things (IoT), they share data and improve interoperability, making homes and factories smarter and more efficient.

AI Technologies in Embedded Systems

AI vs. ML: Artificial Intelligence (AI) is the broad field. Machine learning (ML), a subset of AI, focuses on training algorithms to learn from data and adapt over time. Deep learning, in turn, is a subset of ML that uses artificial neural networks to process unstructured data such as images, audio, and text.

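Discriminative AI: Embedded systems typically use discriminative AI, which classifies or evaluates input data (for example, flagging a defective part on an assembly line) instead of generating new content. These models generally need less compute power than generative models.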

Embedded AI Chips and Cooling Needs

AI processors and modules in embedded applications draw far less power than the high-powered versions in data centers, where liquid cooling with constant monitoring is essential.

Intel FPGAs Support Real-Time Deep Learning Inference for Embedded Systems and Data Centers.

Embedded AI processors often come in compact system-on-module (SOM) formats that include CPUs, memory, and specialized chips like GPUs or DSPs. These modules prioritize space efficiency and typically rely on air cooling—either passive or fan-assisted—rather than the liquid cooling found in high-wattage data centers.
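Air cooling is enough for low-wattage modules, and a standard steady-state estimate shows why: junction temperature equals ambient temperature plus dissipated power times the junction-to-ambient thermal resistance, T_j = T_a + P × θ_JA. The Python sketch below applies that formula. The 40 °C ambient, the 100 °C junction limit, and both thermal-resistance values are illustrative assumptions. They do not come from any specific module datasheet.

```python
# Minimal steady-state thermal budget sketch (assumed values, not datasheet figures).


def junction_temp_c(power_w: float, ambient_c: float, theta_ja_c_per_w: float) -> float:
    """Estimate junction temperature: T_j = T_a + P * theta_JA."""
    return ambient_c + power_w * theta_ja_c_per_w


def max_power_w(tj_max_c: float, ambient_c: float, theta_ja_c_per_w: float) -> float:
    """Largest power a cooling path can remove before T_j reaches tj_max_c."""
    return (tj_max_c - ambient_c) / theta_ja_c_per_w


def required_theta_ja(power_w: float, tj_max_c: float, ambient_c: float) -> float:
    """Junction-to-ambient thermal resistance needed to hold T_j at tj_max_c."""
    return (tj_max_c - ambient_c) / power_w


if __name__ == "__main__":
    AMBIENT_C = 40.0  # assumed air temperature around the module
    TJ_MAX_C = 100.0  # assumed junction temperature limit
    # Hypothetical junction-to-ambient thermal resistances (°C/W) for two air-cooling options.
    cooling_options = {"passive heatsink": 4.0, "fan-assisted heatsink": 1.5}

    for name, theta in cooling_options.items():
        print(f"{name}: up to {max_power_w(TJ_MAX_C, AMBIENT_C, theta):.1f} W")

    # A 1,000 W data center GPU needs a far lower thermal resistance.
    theta_needed = required_theta_ja(1000.0, TJ_MAX_C, AMBIENT_C)
    print(f"1,000 W chip needs theta_JA <= {theta_needed:.2f} °C/W")
```

With these assumed numbers, a passive heatsink handles about 15 W and a fan raises that to about 40 W. A 1,000 W part would need a thermal resistance near 0.06 °C/W, which helps explain why data center accelerators move to liquid cooling.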

When Air Cooling Isn’t Enough

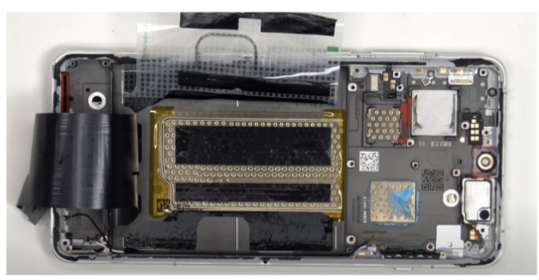

One exception to air cooling for embedded processors is found in some smartphones. Tasked with performing ever more functions, including AI, their increasingly powerful chips require higher-performance cooling.

For example, Qualcomm Snapdragon 8-series chips, used in phones like the OnePlus 13, generate significant heat under heavy loads. Vapor chambers help dissipate that heat across a broader surface for effective cooling without active fans.

The Top-Rated OnePlus 13 Phone Features a Qualcomm Snapdragon 8 Elite Chip. Bottom: A Teardown Video Reveals the Vapor Chamber for Cooling the Snapdragon Chip.
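Simple heat-flux arithmetic, q = P / A, shows why spreading heat helps. The same power spread over a larger area gives a lower flux and a cooler hotspot. The power and area values below are hypothetical placeholders, not measurements of the Snapdragon 8 Elite or the OnePlus 13. The sketch also ignores the vapor chamber's own thermal resistance.

```python
# Heat-flux sketch for heat spreading (hypothetical values, not measured data).


def heat_flux_w_per_cm2(power_w: float, area_cm2: float) -> float:
    """Average heat flux q = P / A over the given area."""
    if area_cm2 <= 0:
        raise ValueError("area must be positive")
    return power_w / area_cm2


if __name__ == "__main__":
    SOC_POWER_W = 8.0       # hypothetical sustained SoC power under heavy load
    HOTSPOT_AREA_CM2 = 1.0  # hypothetical area where heat leaves the chip
    SPREAD_AREA_CM2 = 40.0  # hypothetical vapor chamber footprint

    at_chip = heat_flux_w_per_cm2(SOC_POWER_W, HOTSPOT_AREA_CM2)
    spread = heat_flux_w_per_cm2(SOC_POWER_W, SPREAD_AREA_CM2)
    print(f"flux at chip: {at_chip:.1f} W/cm^2")
    print(f"flux after spreading: {spread:.2f} W/cm^2")
    print(f"reduction: {at_chip / spread:.0f}x")
```

Total power is unchanged. Only the flux drops, in proportion to the area ratio. That is what lets a fanless phone move the heat to its casing without a single hot spot.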

Embedded AI Efficiency

Embedded AI continues to gain ground due to its compact design, low latency, and localized processing. Its benefits include:

Reduced network load by transmitting processed insights rather than raw data

Lower system cost vs. cloud-based AI

Lower power consumption, enabling simpler and cheaper cooling solutions
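Rough arithmetic shows how large the first benefit can be. The Python sketch below compares an uncompressed 1080p camera stream with a stream of short detection messages. The resolution, frame rate, message size, and event rate are all illustrative assumptions. Real cameras compress video, so actual savings are smaller than this uncompressed comparison suggests.

```python
# Bandwidth sketch: raw sensor stream vs. transmitted insights (assumed values).


def raw_video_bytes_per_s(width: int, height: int, bytes_per_pixel: int, fps: float) -> float:
    """Uncompressed video data rate in bytes per second."""
    return width * height * bytes_per_pixel * fps


def event_bytes_per_s(message_bytes: int, events_per_s: float) -> float:
    """Data rate when only inference results (events) are sent."""
    return message_bytes * events_per_s


if __name__ == "__main__":
    raw = raw_video_bytes_per_s(1920, 1080, 3, 30)  # assumed 1080p RGB camera at 30 fps
    insights = event_bytes_per_s(200, 1.0)  # assumed 200-byte message, one per second
    print(f"raw stream: {raw * 8 / 1e9:.2f} Gbit/s")
    print(f"insights:   {insights * 8:.0f} bit/s")
    print(f"reduction:  {raw / insights:,.0f}x")
```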

With AI now embedded across sectors, from smart homes to drones to industrial robotics, thermal management solutions are evolving alongside it to ensure performance and longevity.
